How long does it take to use OS X’s FileMerge on a pair of 25mb files? Somewhere between 20m and 2 hours. I left my computer after 20m since it wasn’t done, but it was when I came back. At this point, I’m convinced I don’t need to worry about using PyPy with Skeinforge, so I can start saving time. Now if I could only quadruple the speed of my ToM…

Tag Archives: Python

Differences in PyPy generated gcode (Updated!)

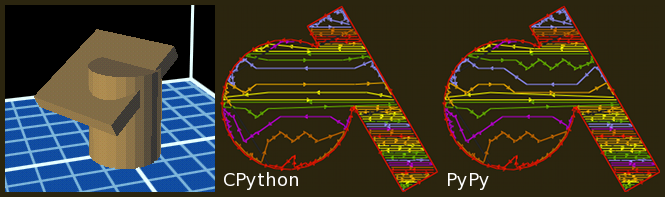

As I said in my last post, the gcode generated when using PyPy instead of CPython can differ, so I’ve been trying to find out when. I already knew that the standard 20mm calibration cube had no differences, and the same seems to be true about a simple cylinder generated with OpenSCAD. So next, I generated a cylinder intersected a box and the differences appeared. It’s in the picture below, but here is the code:

$fn = 25; # So the cylinder is reasonably smooth

union() {

cylinder(30, 12, 12);

translate([5, -5, 25]) {

rotate([0, 30, 30]) {

cube([35, 35, 5], true);

}

}

}

So what’s different?

The first thing I noticed when running a diff between the files is that the bounding boxes for layers can be defined in a slightly different order. For example while CPython will generate this:

(<boundaryPoint> X26.813 Y-12.614 Z16.375 </boundaryPoint>) (<boundaryPoint> X9.313 Y17.697 Z16.375 </boundaryPoint>) (<boundaryPoint> X5.232 Y15.341 Z16.375 </boundaryPoint>) (<boundaryPoint> X22.732 Y-14.969 Z16.375 </boundaryPoint>)

PyPy will put the first line as the fourth. It’s the same bounding box, but it shows up in a diff. More importantly, it also makes different decisions when it comes to fill. My profile is set to a 20% fill, and you can see the differences in the image above. Neither implementation’s output seems to be actually suffer. In the case above (a few layers after the intersection starts), PyPy uses fewer straight lines. But further up on the object CPython put more angles in. Things are never too different though, they are kind of close. Maybe this isn’t something to worry too much about. I’ll have to spot check one of the more complicated objects to see if an obvious problem occurs.

Update:

After posting this I received this email from Carl Friedrich Bolz, one of the PyPy developers:

[I] wanted to point out two of the most likely sources of differences (I’m a PyPy developer):

– dictionary order is not guaranteed between implementations

– CPython probably uses x87 floating point math, whereas PyPy uses SSE2. those two have slightly different rounding behavior, so if the algorithms are not numerically stable, you can get diverging results.if you find out that it’s not one of those too, it might be a bug in PyPy.

The SSE issue was actually one of my theories for what was going on. Rounding on floating point numbers would explain what Skeinforge is doing. On my example layer above, PyPy must have thought it was a hair under on plastic so it added some extra kinks in the fill lines to make up the difference. The dictionary ordering explains the outline coordinates.

I haven’t gotten around to spot checking the larger object, but between my results above and confirmation that PyPy handles floating point number differently… I think I’m going to start using it permanently with Skeinforge. The 4x speedup is really wonderful.

Trying PyPy with ReplicatorG

While I’ve been having a ton of fun with my MakerBot Thing-O-Matic, generating the gcode to run the machine takes an amazingly long. Despite having a quad-core Core2Duo running a hair over 2.5GHz, Skeinforge is not efficient. Python isn’t fast at that much number crunching, and the process that turns the 3D model into a series of 2D layers is almost totally serial.

I’ve been using a program called Pleasent3D to look at the gcode Skeinforge makes to look for errors. While reading about the software, I found a blog entry by the author describing how he got tired of waiting on Skeinforge and then ported the gcode gene ration algorithms to Objective-C and ended up with a massive speedup (up to 230x in one module).

Now I don’t want to get away from the official MakerBot way of doing things yet, I’m not that confident. The easiest way to speed up Skeinforge would be to speed up Python. People used to recommend Psycho, but it is no longer updated and doesn’t run in 64-bit mode (like Python does on my Mac). I finally got around to playing with PyPy, which grew out of Psyscho, to see just how much of a difference it made. I’d seen references to other people doing this, so I knew all the libraries had to be there.

Installing PyPy & TkInter

Installing PyPy was as easy as downloading a pre-built binary distribution and unzipping it. The problem is that to use PyPy with Skeinforge (and therefor ReplicatorG), you need to have TkInter installed. I found a great set of steps to follow in a PyPy blog entry about using the IDLE IDE. The only problem was the TkInter package for PyPy is looking for Tk8.4, but OS X Lion has 8.5. Luckily since it’s a point release I figured I could just swap them out and it worked out fine. I updated the files in the tkinter-pypy install file and it worked fine (updated zip for download). Making ReplicatorG use PyPy is as easy as selecting it in the preferences window.

Benchmarking

So I ran some benchmarks by running a couple of models through with both CPython and PyPy with my normal profile, no support:

| Model | CPython | PyPy | Speed |

|---|---|---|---|

| 20mm calibration cube | 19s | 13s | 1.46x |

| Vica illusion sculpture | 5m 36s | 1m 48s | 3.11x |

| V8 cam shafts | 6m 37s | 1m 18s | 5.09x |

| Tank! | 2h 45m 29s | 38m 45s | 4.27x |

As you can see, PyPy can be dramatically faster. I chose the Tank! model (which unmodified is about 4x the max print area on my ToM) to see just how big the difference would be on very complex models. Getting a 4x speedup is a great result, but there is just one minor problem: the gcode is different. Generating gcode should be deterministic. Given the same profile, the same model, and the same version of Skeinforge you should always get the same output.

In the case of the 20mm calibration cube, the gcode is identical. But for every other model, it’s different. The F parameters on the move commands are different, and sometimes the points seem different. I haven’t looked at it too hard yet. It seems like it might be due to floating point differences, but PyPy is supposed to be a drop in replacement for most Python programs so I doubt this should be happening.

I’m going to have to look into it further.